Dataset

The dataset contains digital recordings from the da Vinci Xi robotic system (Intuitive Surgical Inc.), which integrates a binocular endoscope with a diameter of 8 mm. Two lenses (0° and 30°) were used. During different stages of the operation, the 30° lens can be used looking either up or down to improve visualisation. The videos used for this challenge are monocular.

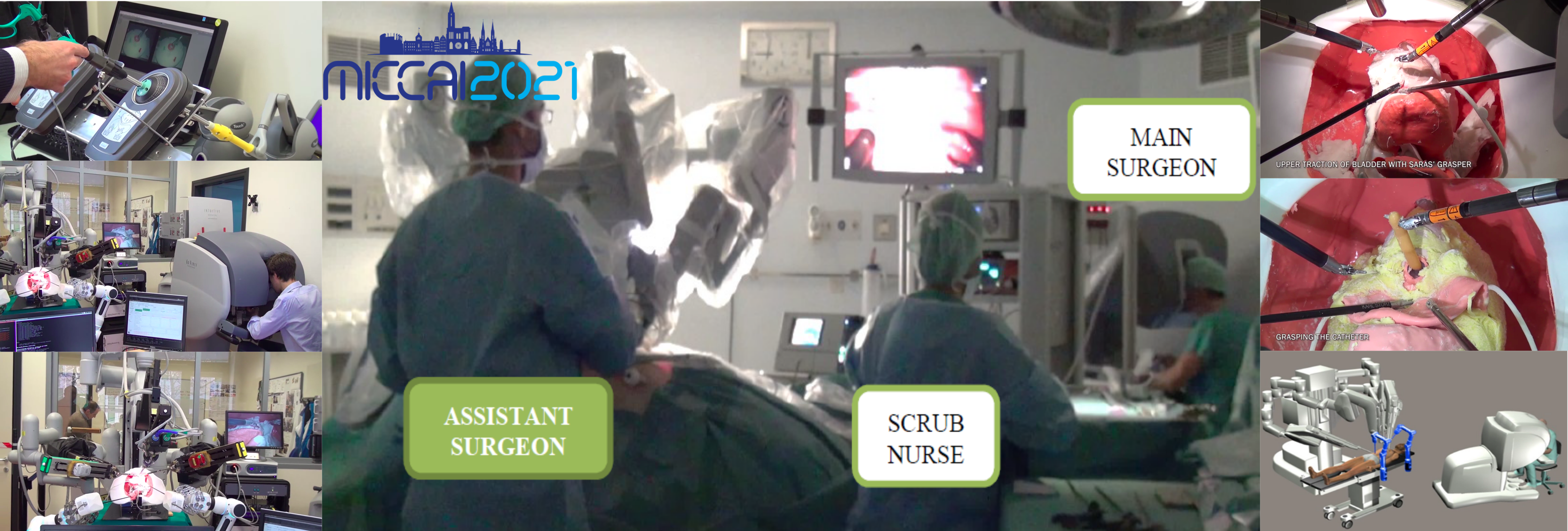

The dataset contains two sub-datasets: MESAD-Real and MESAD-Phantom. MESAD-Real consists of prostatectomy procedures recorded on human patients. It contains four sessions of complete prostatectomy procedures performed by expert surgeons on real patients. The patients' consent was obtained for the recording as well as the distribution of the data. More details can be found in the SARAS ethics and data compliance document, available here. MESAD-Phantom is also designed for surgeon action detection during prostatectomy, but is composed of videos captured during procedures on artificial anatomies ('phantoms') that are used for training surgeons and were specifically designed for the SARAS demonstrators.

MESAD-Real comprises 21 action classes, while MESAD-Phantom has a smaller list of 14 action classes. The two datasets share 11 action classes. All action classes are listed below.

List of action classes in MESAD-Real:

CuttingMesocolon, PullingVasDeferens, ClippingVasDeferens, CuttingVasDeferens, ClippingTissue, PullingSeminalVesicle, ClippingSeminalVesicle, CuttingSeminalVesicle, SuckingBlood, SuckingSmoke, PullingTissue, CuttingTissue, BaggingProstate, BladderNeckDissection, BladderAnastomosis, PullingProstate, ClippingBladderNeck, CuttingThread, UrethraDissection, CuttingProstate, PullingBladderNeck

List of action classes in MESAD-Phantom:

PullingVasDeferens, CuttingVasDeferens, PullingSeminalVesicle, CuttingSeminalVesicle, PullingTissue, CuttingTissue, BladderNeckDissection, BladderAnastomosis, PullingProstate, UrethraDissection, GraspingCatheter, MovingDownBladder, PassingNeedle, PullingBladderNeck
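The overlap between the two class lists can be checked with a few set operations. The snippet below is only an illustrative sketch: the variable names are made up for this example, and the class names are copied from the two lists above.

```python
# Class names copied from the two lists above; variable names are illustrative.
MESAD_REAL = {
    "CuttingMesocolon", "PullingVasDeferens", "ClippingVasDeferens",
    "CuttingVasDeferens", "ClippingTissue", "PullingSeminalVesicle",
    "ClippingSeminalVesicle", "CuttingSeminalVesicle", "SuckingBlood",
    "SuckingSmoke", "PullingTissue", "CuttingTissue", "BaggingProstate",
    "BladderNeckDissection", "BladderAnastomosis", "PullingProstate",
    "ClippingBladderNeck", "CuttingThread", "UrethraDissection",
    "CuttingProstate", "PullingBladderNeck",
}

MESAD_PHANTOM = {
    "PullingVasDeferens", "CuttingVasDeferens", "PullingSeminalVesicle",
    "CuttingSeminalVesicle", "PullingTissue", "CuttingTissue",
    "BladderNeckDissection", "BladderAnastomosis", "PullingProstate",
    "UrethraDissection", "GraspingCatheter", "MovingDownBladder",
    "PassingNeedle", "PullingBladderNeck",
}

# Classes present in both sub-datasets, and those unique to each one.
shared = MESAD_REAL & MESAD_PHANTOM
real_only = MESAD_REAL - MESAD_PHANTOM
phantom_only = MESAD_PHANTOM - MESAD_REAL

print(len(MESAD_REAL), len(MESAD_PHANTOM), len(shared))  # 21 14 11
print(sorted(phantom_only))
# ['GraspingCatheter', 'MovingDownBladder', 'PassingNeedle']
```

A model trained on one sub-dataset and evaluated on the other can only be compared on the classes in `shared`.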

The images below show sample annotations from MESAD-Real: actions annotated on real patients undergoing prostatectomy.

The images below show sample annotations from MESAD-Phantom: actions annotated during procedures on artificial anatomies (phantoms).

Dataset structure

The final dataset is divided into three sets: train, validation and test. Each of these sets contains samples from both tasks. The training set for MESAD-Real contains 23,366 images and the training set for MESAD-Phantom contains 22,609 images. The validation sets for MESAD-Real and MESAD-Phantom contain 2,024 and 2,285 images, respectively. The test set will only be released during the evaluation phase. The test-to-validation sample ratio for both datasets is 4:1. Please make sure that the validation set is not part of your training data; otherwise the submission may be disqualified.

Both datasets contain images with a resolution of 720 x 576 pixels. MESAD-Real contains 21 action classes and MESAD-Phantom contains 14 action classes, 11 of which exist in both datasets. The released zip files for both datasets contain the training (train) and validation (val) sets. Each set contains images and annotations directories, holding the images and their respective annotations.
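Since validation data must not leak into training, a quick sanity check on the extracted folders may be useful. The sketch below assumes the layout described above (`train/images` and `val/images` under some extraction root) and that images use the `.jpg` extension. The function names are hypothetical, and matching by file name is only a rough proxy for real duplication.

```python
from pathlib import Path
from typing import Iterable, Set


def image_names(split_dir) -> Set[str]:
    """Collect .jpg file names from <split_dir>/images (assumed layout)."""
    return {p.name for p in (Path(split_dir) / "images").glob("*.jpg")}


def overlapping_names(train_names: Iterable[str], val_names: Iterable[str]) -> Set[str]:
    """Return the file names that appear in both splits."""
    return set(train_names) & set(val_names)


# Example with in-memory names; with real data one would call, for instance,
# overlapping_names(image_names("train"), image_names("val")).
leaked = overlapping_names(
    ["real1_frame_1.jpg", "real1_frame_2.jpg"],
    ["real1_frame_2.jpg", "real2_frame_7.jpg"],
)
print(sorted(leaked))  # ['real1_frame_2.jpg']
```

An empty result does not prove the splits are disjoint in content, but a non-empty one is a clear sign that the training list needs to be rebuilt.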

For each image named realX_frame_Y.jpg, there are two tsv files. The first file (realX_frame_Y.bboxes.tsv) contains the bounding boxes for the action classes and the second file (realX_frame_Y.bboxes.labels.tsv) contains the corresponding labels. Each row in the bounding-box file is one target bounding box, and its label is in the corresponding row of the labels file. A bounding box consists of four values, x1, y1, x2, y2, in exactly that order: (x1, y1) are the coordinates of the top-left corner of the bounding box and (x2, y2) are the coordinates of the bottom-right corner.
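The annotation layout described above can be read with a small helper. This is a minimal sketch rather than an official loader. It assumes that the four coordinates in each row are separated by tabs (or other whitespace), that they parse as numbers, and that each row of the labels file holds a single label, kept here as a string. The names `Box`, `parse_annotations` and `load_annotations` are hypothetical.

```python
from pathlib import Path
from typing import List, NamedTuple


class Box(NamedTuple):
    x1: float  # top-left corner, x
    y1: float  # top-left corner, y
    x2: float  # bottom-right corner, x
    y2: float  # bottom-right corner, y
    label: str


def parse_annotations(bboxes_text: str, labels_text: str) -> List[Box]:
    """Pair each bounding-box row with the label on the same row."""
    bbox_rows = [ln for ln in bboxes_text.splitlines() if ln.strip()]
    label_rows = [ln.strip() for ln in labels_text.splitlines() if ln.strip()]
    if len(bbox_rows) != len(label_rows):
        raise ValueError("bounding-box and label files have different row counts")

    boxes = []
    for row, label in zip(bbox_rows, label_rows):
        fields = row.split()  # assumes whitespace/tab-separated numbers
        if len(fields) != 4:
            raise ValueError(f"expected 4 values (x1, y1, x2, y2), got: {row!r}")
        x1, y1, x2, y2 = map(float, fields)
        boxes.append(Box(x1, y1, x2, y2, label))
    return boxes


def load_annotations(annotations_dir, image_name: str) -> List[Box]:
    """Read both tsv files that belong to one image, e.g. realX_frame_Y.jpg."""
    stem = Path(image_name).stem
    folder = Path(annotations_dir)
    bboxes_text = (folder / f"{stem}.bboxes.tsv").read_text()
    labels_text = (folder / f"{stem}.bboxes.labels.tsv").read_text()
    return parse_annotations(bboxes_text, labels_text)


# In-memory example with made-up coordinates:
example = parse_annotations("10\t20\t110\t220\n", "PullingTissue\n")
print(example[0].label, example[0].x2 - example[0].x1)  # PullingTissue 100.0
```

Raising an error on mismatched row counts keeps a misaligned pair of files from silently assigning labels to the wrong boxes.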

Please download the challenge dataset from the Download tab.

Citation

If you find this dataset useful in your research or have participated in the associated challenges, please cite the following publications:

- Bawa, Vivek Singh, et al. "The SARAS Endoscopic Surgeon Action Detection (ESAD) dataset: Challenges and methods." arXiv preprint arXiv:2104.03178 (2021).

- Bawa, Vivek Singh, et al. "ESAD: Endoscopic Surgeon Action Detection Dataset." arXiv preprint arXiv:2006.07164 (2020).

Licensing

This dataset is licensed under a CC BY-NC-SA 4.0 license.